AI is pushing into HR on multiple fronts, so it’s useful for HR to develop some sophistication in understanding its limitations without falling prey to cynicism.

The starting point is to recognize that AI outperforms humans on many tasks, especially if you factor in costs. Google Translate provides a nice case to grasp the strengths and weaknesses of AI. It does a decent job of translating, and it does it for free. It handles many more languages than a human translator, but falls short of humans on subtleties, style and grammar.

The normal strengths and weaknesses of Google Translate are unproblematic. What is worrisome is that if you type in some nonsense (such as “dog, dog, dog, dog…”), translate it to another language and then back to English, it may, in very rare cases, come back with a translation such as “Doomsday Clock is three minutes at twelve. We are experiencing characters and dramatic developments in the world, which indicate that we are increasingly approaching the end times and Jesus’ return.” (A Reddit user first posted about it, and then it went viral.)

The limitation with AI isn’t that it may underperform humans; it’s that we generally don’t understand what it’s doing, and that has several consequences:

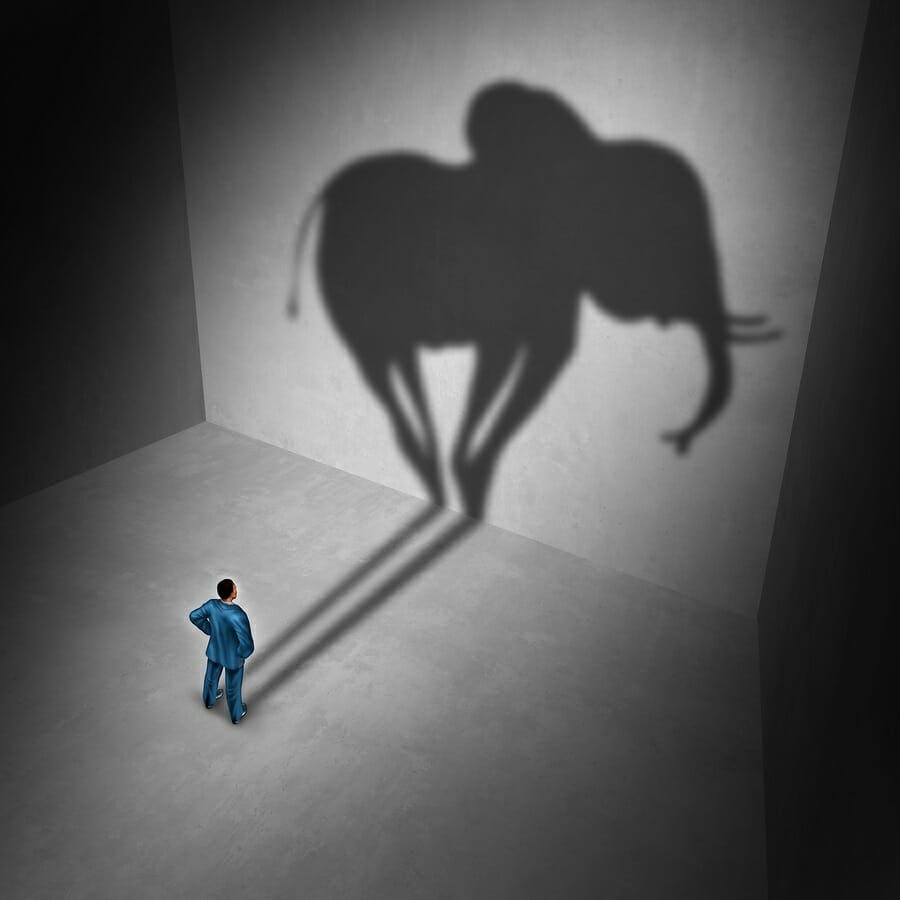

It can make unanticipated errors: The strange translation mentioned above is one example of a totally unanticipated error. Another example is an AI that was good at recognizing pictures of animals such as cats and elephants. Unfortunately, when shown a picture of a cat with the texture of an elephant’s skin, the AI thought it was an elephant. It turns out the AI was overemphasizing texture in identifying the animals. Other tests have shown how automated vehicles, which are generally very good at recognizing stop signs, can be utterly fooled by certain minor changes to the sign. The problem is not just that the AI isn’t perfect; it’s that our intuition about how it might go wrong is poor.

If the situation changes slightly, it could fail totally: An AI may suddenly stop making useful predictions if some condition changes. For example, a human Go player can adapt to something like a change in the shape of the board, whereas an AI Go player will probably need to start learning again from scratch.

It can be biased against certain groups: Not much needs to be said about bias since HR is so aware of this issue. The harder question for HR will be assessing whether an AI is more or less biased than existing methods.

It can’t explain why it recommends what it recommends: An AI might be very good at predicting which employees should be promoted. However, if it can’t explain its reasons, managers will find it hard to have any confidence in the recommendation.

Humans are never very predictable: The sorts of things HR cares about (who will be a top performer, who will be guilty of misconduct, who to add to a team) are all very hard to predict, whether you are using a room full of human experts or a cloud full of AIs. AIs can help, but the decisions will remain hit and miss.
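On the bias point above, one way HR might compare an AI tool with an existing method is to compute the same fairness measure for both. The sketch below uses the adverse impact ratio (the lowest group's selection rate divided by the highest group's), which is the quantity behind the "four-fifths" rule of thumb in US selection guidelines. The group labels, the numbers, and the function names are all made up for illustration; a real assessment would need real outcome data and proper statistical and legal review.

```python
from collections import defaultdict

def selection_rates(decisions):
    """decisions: iterable of (group, selected) pairs. Returns {group: rate}."""
    totals = defaultdict(int)
    chosen = defaultdict(int)
    for group, selected in decisions:
        totals[group] += 1
        if selected:
            chosen[group] += 1
    return {group: chosen[group] / totals[group] for group in totals}

def adverse_impact_ratio(decisions):
    """Lowest group selection rate divided by the highest group selection rate."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return None  # nobody was selected, so the ratio is undefined
    return min(rates.values()) / highest

# Hypothetical outcomes for two groups of 100 candidates each (invented numbers).
existing_process = ([("A", True)] * 30 + [("A", False)] * 70
                    + [("B", True)] * 27 + [("B", False)] * 73)
ai_tool = ([("A", True)] * 30 + [("A", False)] * 70
           + [("B", True)] * 18 + [("B", False)] * 82)

existing_ratio = adverse_impact_ratio(existing_process)
ai_ratio = adverse_impact_ratio(ai_tool)

print(f"Existing process: {existing_ratio:.2f}")
print(f"AI tool:          {ai_ratio:.2f}")
# A ratio below 0.8 is the usual flag for a closer look, not proof of bias.
```

With these invented numbers the AI tool would look worse than the existing process, but the value of the exercise is the side-by-side comparison on one measure, not the specific figures.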

The safeguard against all these issues is to have humans regularly audit the outputs of an AI application for serious failures, and never to make a big decision based on an AI’s output alone.
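As a rough illustration of what such an audit process could look like in practice, the sketch below routes every high-stakes AI recommendation to a human reviewer and draws a random spot-check sample from the rest. The record fields (`id`, `high_stakes`), the 10% sample rate, and the function name are assumptions chosen for the example, not a description of any particular HR system.

```python
import math
import random

def select_for_audit(records, sample_rate=0.1, seed=None):
    """Split AI recommendations into mandatory reviews and a random spot check.

    records: list of dicts with a boolean "high_stakes" field (hypothetical schema).
    Returns (must_review, spot_check).
    """
    rng = random.Random(seed)
    # Big decisions are never made on the AI's output alone.
    must_review = [r for r in records if r["high_stakes"]]
    routine = [r for r in records if not r["high_stakes"]]
    # Spot-check a fraction of routine outputs, at least one if any exist.
    k = min(len(routine), max(1, math.ceil(len(routine) * sample_rate))) if routine else 0
    spot_check = rng.sample(routine, k)
    return must_review, spot_check

# Hypothetical batch: 50 recommendations, every tenth one marked high stakes.
records = [{"id": i, "high_stakes": i % 10 == 0} for i in range(50)]
must_review, spot_check = select_for_audit(records, sample_rate=0.1, seed=42)

print(len(must_review), "mandatory reviews;", len(spot_check), "spot checks")
```

Random sampling matters here because, as noted above, our intuition about where an AI will go wrong is poor, so reviewers should not only look at the cases they already suspect.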

What is interesting?

- AI continues to achieve amazing things despite its limitations. Check out IBM’s Project Debater or Google’s Duplex to see how smart AI can be.

What is really important?

- HR will be increasingly dealing with AI systems. We need to be aware of the real limitations without using those limitations as an excuse for not adopting beneficial technologies.

- AI researchers are fully aware of the limitations noted in this article and are making progress in mitigating them.

- HR needs to have a process for auditing the output of AIs to ensure they’ve not gone off the rails in some unanticipated way.